I'm a UX designer, not a software engineer. But last year, I had an idea that wouldn't leave me alone - what if you could listen to your Twitter feed instead of doom-scrolling through it? So I did something I never thought I'd do: I built a Chrome extension. Here's how I pulled it off without writing a single line of code myself.

The Design-First Approach

Before touching any code, I spent weeks designing the extension, which became Xeder. I sketched wireframes, built interactive prototypes in Figma, and tested the flows on friends. The key was thinking like a product designer, not a developer. What would make this simple? What would feel natural? What could I actually pull off?

I designed every screen, every interaction, every edge case. The pause button here, the skip button there, the volume control. I mapped out the entire audio player UI and thought through how voice selection would work. This design clarity became invaluable when it was time to bring it to life - I had a complete blueprint.

I also wrote down my assumptions about the technical architecture. I knew Chrome extensions had limitations, so I researched what was possible and noted the constraints right in my design. That meant when I started working with AI, I wasn't flying blind about what was actually feasible.

Working with Claude: The Collaboration Model

Here's where the magic happened. I used Claude as my co-builder. It wasn't just about asking for code, though - it was a real collaboration.

I'd start by sharing my design specifications, explaining what each component needed to do. Claude would ask clarifying questions about browser APIs, performance constraints, and user flows. We'd iterate together. I'd describe the interaction: "When the user clicks play, the extension should start reading tweets aloud." Claude would explain the technical approach and I'd either approve it or ask for alternatives.

The best part? I could actually understand the code Claude wrote. Being a designer, I could read the logic and reason about it. When something felt off, I could push back: "That won't work because the feed loads dynamically" - and we'd refine the approach.

This wasn't me copy-pasting code and praying. It was building something together.

The MV3 Audio Architecture Challenge

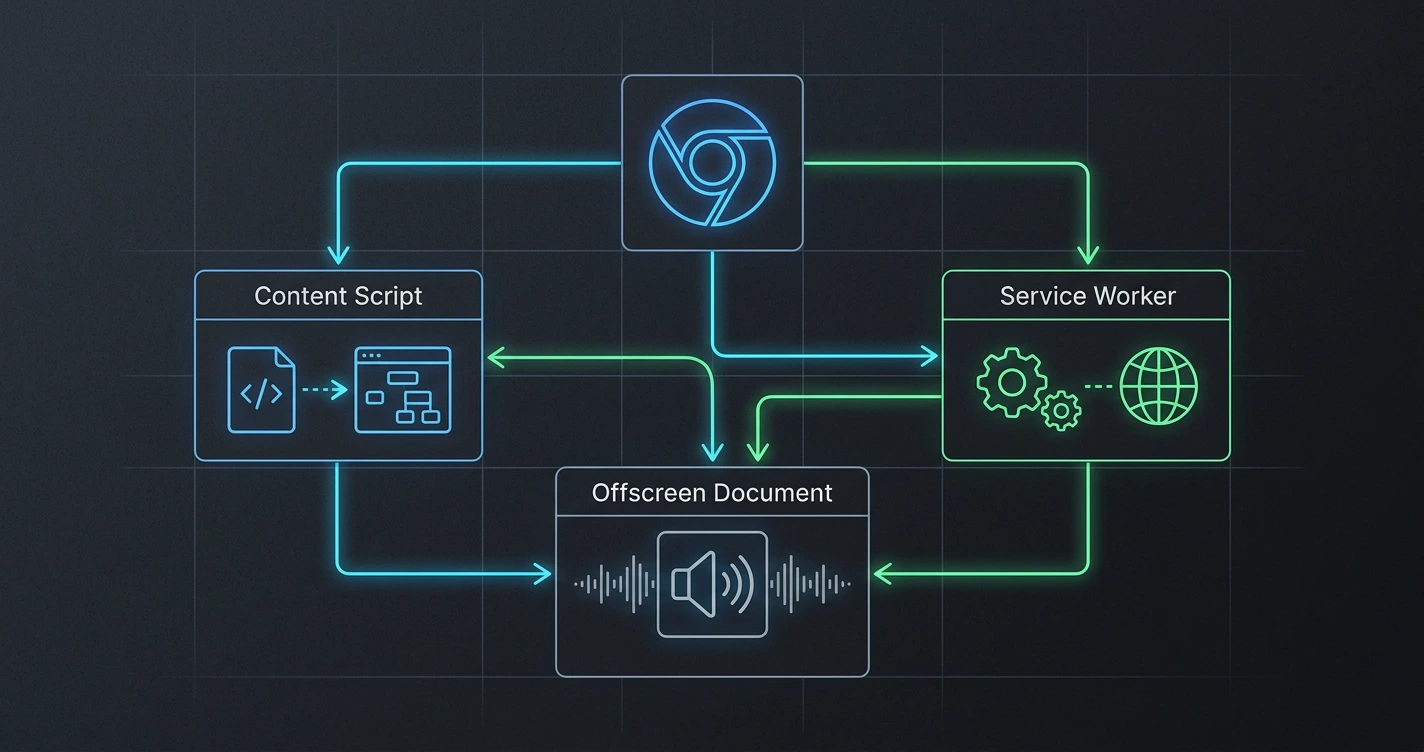

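The biggest technical hurdle we faced: Chrome's Manifest V3 (MV3) doesn't let service workers play audio directly. An extension service worker has no DOM, so there's no audio element or Web Audio API to call from it. That's a real problem when you're trying to build an audio player in an extension.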

We solved it with offscreen documents - basically, a hidden document running in the background that handles audio playback while the service worker controls everything else. It sounds hacky, but it's actually Google's recommended pattern for this exact problem.
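To make the pattern concrete, here is a minimal sketch of how a service worker might make sure exactly one offscreen document exists before sending it anything. It is not Xeder's actual code. The `OffscreenHost` interface, the `offscreen.html` file name, and the function names are all hypothetical; in a real extension the two host methods would most likely wrap `chrome.runtime.getContexts` and `chrome.offscreen.createDocument`, and the manifest would need the `offscreen` permission.

```typescript
// Hypothetical slice of the Chrome APIs this sketch depends on.
// In a real service worker, hasOffscreenDocument could wrap
// chrome.runtime.getContexts({ contextTypes: ["OFFSCREEN_DOCUMENT"] })
// and createDocument could wrap chrome.offscreen.createDocument.
interface OffscreenHost {
  hasOffscreenDocument(): Promise<boolean>;
  createDocument(params: {
    url: string;
    reasons: string[];
    justification: string;
  }): Promise<void>;
}

// Assumed file name for the hidden page that would own audio playback.
const OFFSCREEN_URL = "offscreen.html";

// Shared in-flight promise so overlapping calls do not create two documents.
let pending: Promise<void> | null = null;

async function createIfMissing(host: OffscreenHost): Promise<void> {
  if (await host.hasOffscreenDocument()) {
    return; // Already there, nothing to do.
  }
  await host.createDocument({
    url: OFFSCREEN_URL,
    reasons: ["AUDIO_PLAYBACK"],
    justification: "Read tweets aloud while the service worker stays DOM-free",
  });
}

async function ensureOffscreenDocument(host: OffscreenHost): Promise<void> {
  if (pending) {
    return pending; // A check or creation is already running; wait for it.
  }
  pending = createIfMissing(host);
  try {
    await pending;
  } finally {
    pending = null; // Allow a fresh check on the next call.
  }
}
```

The shared `pending` promise is the one design choice worth noting: two play clicks in quick succession would otherwise both see "no document yet" and both try to create one.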

Here's the architecture: the service worker receives the tweet text and sends it to the offscreen document, which converts it to speech and plays it. The service worker handles UI updates, pause states, and skip commands. It's elegant in its own way - each component has one job.
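The split of responsibilities described above can be sketched as a small state machine on the service worker side. This is an illustration under my own assumptions, not the code Xeder ships: the command names, the message shapes, and the `step` function are all hypothetical. The idea is that the service worker only decides what should happen and emits messages, while the offscreen document would be the only part that touches audio.

```typescript
// Commands the service worker might receive from the extension UI (assumed names).
type Command =
  | { type: "enqueue"; texts: string[] }
  | { type: "play" }
  | { type: "pause" }
  | { type: "skip" };

// Messages the service worker would forward to the offscreen document (assumed shape).
type OffscreenMessage =
  | { target: "offscreen"; action: "speak"; text: string }
  | { target: "offscreen"; action: "pause" }
  | { target: "offscreen"; action: "resume" }
  | { target: "offscreen"; action: "stop" };

interface PlayerState {
  queue: string[]; // Tweet texts waiting to be read
  index: number; // Position of the current tweet in the queue
  status: "idle" | "playing" | "paused";
}

interface StepResult {
  state: PlayerState;
  outgoing: OffscreenMessage[];
}

const initialState: PlayerState = { queue: [], index: 0, status: "idle" };

function speak(text: string): OffscreenMessage {
  return { target: "offscreen", action: "speak", text };
}

// Pure transition: takes the current state and a command, returns the next
// state plus the messages that should go to the offscreen document.
function step(state: PlayerState, cmd: Command): StepResult {
  switch (cmd.type) {
    case "enqueue":
      return { state: { ...state, queue: [...state.queue, ...cmd.texts] }, outgoing: [] };
    case "play": {
      if (state.status === "paused") {
        return {
          state: { ...state, status: "playing" },
          outgoing: [{ target: "offscreen", action: "resume" }],
        };
      }
      if (state.status === "playing" || state.index >= state.queue.length) {
        return { state, outgoing: [] }; // Already playing, or nothing to read.
      }
      return { state: { ...state, status: "playing" }, outgoing: [speak(state.queue[state.index])] };
    }
    case "pause": {
      if (state.status !== "playing") {
        return { state, outgoing: [] };
      }
      return {
        state: { ...state, status: "paused" },
        outgoing: [{ target: "offscreen", action: "pause" }],
      };
    }
    case "skip": {
      const index = Math.min(state.index + 1, state.queue.length);
      if (index < state.queue.length) {
        return { state: { ...state, index, status: "playing" }, outgoing: [speak(state.queue[index])] };
      }
      // Ran off the end of the queue: stop playback and go idle.
      return {
        state: { ...state, index, status: "idle" },
        outgoing: [{ target: "offscreen", action: "stop" }],
      };
    }
  }
}
```

In a real extension, each entry in `outgoing` would probably be passed to `chrome.runtime.sendMessage`, with the offscreen document listening via `chrome.runtime.onMessage` and ignoring anything whose `target` is not `"offscreen"`. Keeping `step` free of Chrome calls means the play, pause, and skip logic can be checked without loading the extension at all.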

Understanding this problem and solution taught me something important: constraints breed creativity. We didn't work around MV3 limitations - we found the pattern that worked within them. It's actually more robust because of it.

Lessons I Learned

Design clarity saves development time. Because I was crystal clear about what I wanted, Claude could build exactly that. No assumptions, no pivots needed. The design spec became the source of truth.

Understanding beats copying. I made sure to understand the code Claude wrote. Not as a programmer - as someone responsible for the product. This meant I could debug issues, explain them to users, and make informed decisions about changes.

AI is a thought partner, not a magic button. The best outcomes came when I challenged Claude's first approach or asked "what about X?" and we explored options together. It felt less like outsourcing and more like having the best developer friend who's always available.

Constraints are real. I learned the actual limitations of browser extensions. Audio in MV3 is one. Cross-origin access is another. Knowing these constraints meant designing around them, not against them. This made Xeder genuinely better.

Why This Matters

I'm telling you this because I think the future of building is collaborative. Not every great product needs to be built by someone with a computer science degree. Design thinking matters. Product thinking matters. Understanding what users need matters. And now, AI can handle the implementation details if you can articulate what you want.

If you have a product idea and you're good at design or product thinking, you have no excuse not to build it. The tools exist. Find a thinking partner - human or AI - and figure it out together.

Xeder exists because I believed the idea was good enough to spend weeks on design before writing any code. I'd recommend the same approach to anyone. Design first, code second. Collaborate third. Launch fourth. (: